At the heart of machine learning lies a fundamental concept: algorithms. These sets of instructions tell computers how to carry out tasks, from simple calculations to complex problem solving. Understanding them can feel daunting, but it doesn't have to be. This article demystifies some of the most common machine learning algorithms, breaking down what each one does and where it is applied.

The Building Blocks: Understanding Algorithms

An algorithm is essentially a recipe for solving a problem. It consists of a finite series of steps, executed in a specific order, to accomplish a particular task. It is important to note, however, that an algorithm is not a complete program or piece of code; it is the logic underlying a solution to a problem.

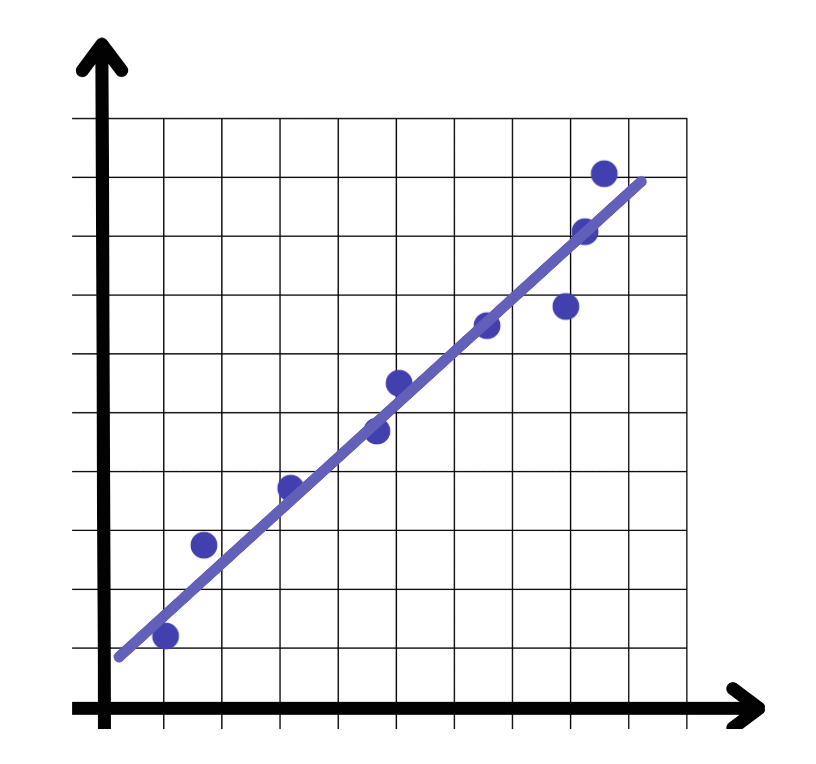

Linear Regression

Linear regression is a supervised learning algorithm and one of the basic building blocks of machine learning. It models the relationship between a continuous target variable and one or more predictors. By fitting a linear equation to the observed data, it lets you predict outcomes for new inputs. Imagine trying to predict house prices from size and location: linear regression does this by estimating how much the price changes, on average, with each of those variables.
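As a minimal sketch of the idea, assuming a single predictor (house size) and made-up numbers, ordinary least squares can be written in a few lines of plain Python. The function name `fit_line` and the data are illustrative and do not come from any particular library.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one predictor: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = covariance(x, y) / variance(x); the 1/n factors cancel.
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical data: house size in square metres vs. price in thousands.
sizes = [50, 70, 90, 110]
prices = [150, 210, 270, 330]

slope, intercept = fit_line(sizes, prices)
predicted_price = slope * 100 + intercept  # prediction for a 100 m² house
print(slope, intercept, predicted_price)
```

With several predictors (for example size plus an encoded location), the same principle applies but the fit is usually solved with matrix methods rather than this closed-form expression.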

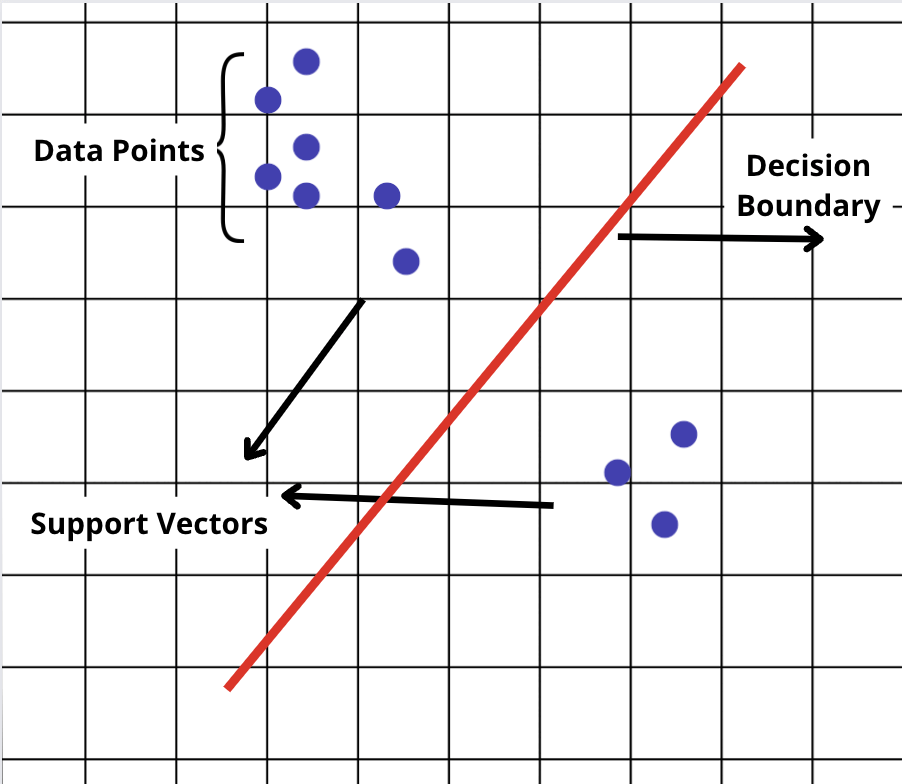

Support Vector Machines (SVM)

SVM is another supervised learning algorithm, used mainly for classification. It separates categories by finding the optimal boundary (the decision boundary) that divides the classes with the largest possible margin. Maximizing the margin makes SVM particularly useful when the classes lie close together and the distinction between them is not immediately obvious.
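The margin idea can be sketched with a linear SVM trained by sub-gradient descent on the regularized hinge loss. This is a simplified illustration under stated assumptions: labels are +1 and -1, the data is a tiny hypothetical set, and the names `train_linear_svm` and `predict_svm` are made up for this example. Production libraries use more sophisticated solvers and support kernels.

```python
def train_linear_svm(points, labels, lr=0.1, lam=0.01, epochs=20):
    """Linear SVM via sub-gradient descent on the regularized hinge loss."""
    w = [0.0] * len(points[0])
    b = 0.0
    for _ in range(epochs):
        for x, y in zip(points, labels):
            margin = y * (sum(wi * xi for wi, xi in zip(w, x)) + b)
            # L2 regularization shrinks the weights at every step.
            w = [wi * (1 - lr * lam) for wi in w]
            # Points inside the margin (or misclassified) push the boundary away.
            if margin < 1:
                w = [wi + lr * y * xi for wi, xi in zip(w, x)]
                b += lr * y
    return w, b


def predict_svm(w, b, x):
    score = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1 if score >= 0 else -1


# Hypothetical, linearly separable data with labels +1 and -1.
points = [(2.0, 2.0), (3.0, 3.0), (-2.0, -2.0), (-3.0, -3.0)]
labels = [1, 1, -1, -1]

w, b = train_linear_svm(points, labels)
print(predict_svm(w, b, (1.0, 1.0)), predict_svm(w, b, (-1.0, -1.0)))
```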

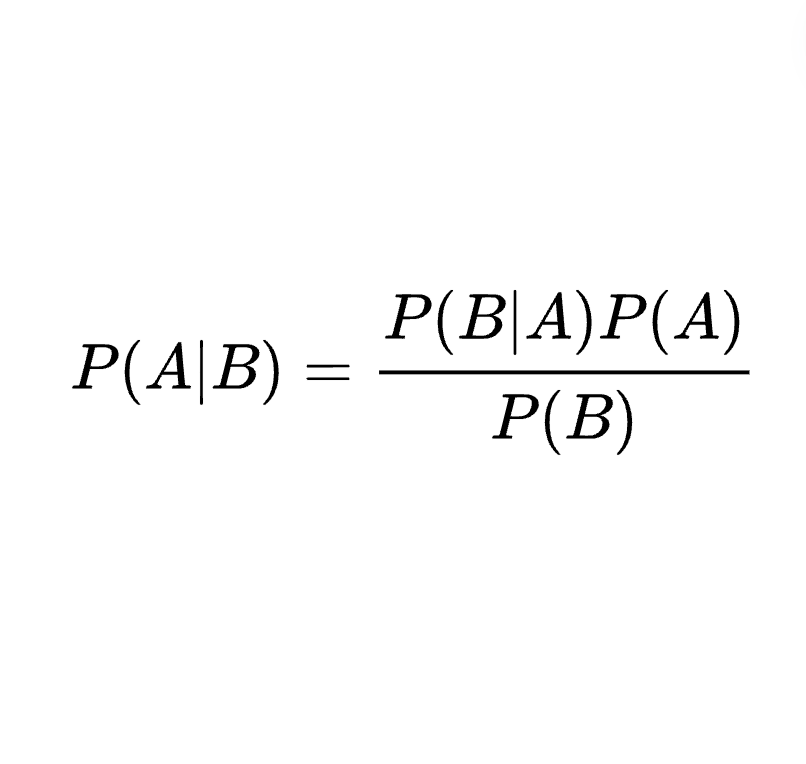

Naive Bayes

The Naive Bayes classifier rests on a simple assumption: the features it analyzes are independent of one another given the class. Despite this simplification, Naive Bayes can be remarkably effective, especially in text classification tasks such as spam detection. It applies Bayes' theorem, updating the probability of a hypothesis as more evidence becomes available.
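To make the spam example concrete, here is a small sketch of a multinomial Naive Bayes classifier over word counts, with Laplace (add-one) smoothing so that unseen words do not zero out a class. The function names and the four toy messages are hypothetical and chosen only for illustration.

```python
import math
from collections import Counter


def train_naive_bayes(docs, labels):
    """Count words per class and documents per class."""
    word_counts = {label: Counter() for label in set(labels)}
    doc_counts = Counter(labels)
    for words, label in zip(docs, labels):
        word_counts[label].update(words)
    vocab = {w for counts in word_counts.values() for w in counts}
    return word_counts, doc_counts, vocab


def predict_naive_bayes(model, words):
    """Pick the class with the highest log prior + summed log likelihoods."""
    word_counts, doc_counts, vocab = model
    total_docs = sum(doc_counts.values())
    best_label, best_score = None, -math.inf
    for label in doc_counts:
        score = math.log(doc_counts[label] / total_docs)
        total_words = sum(word_counts[label].values())
        for w in words:
            # Laplace smoothing: add 1 to every word count.
            score += math.log((word_counts[label][w] + 1) / (total_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


# Hypothetical training messages, already split into words.
docs = [
    ["win", "money", "now"],
    ["free", "money"],
    ["meeting", "tomorrow"],
    ["project", "meeting", "now"],
]
labels = ["spam", "spam", "ham", "ham"]

model = train_naive_bayes(docs, labels)
print(predict_naive_bayes(model, ["free", "money"]))
print(predict_naive_bayes(model, ["meeting", "tomorrow"]))
```

Summing log probabilities, rather than multiplying raw probabilities, is a common way to avoid numerical underflow on longer messages.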

Logistic Regression

Logistic regression is widely used for binary classification problems, where there are only two possible outcomes. By applying the logistic (or sigmoid) function, it turns a linear combination of the inputs into a probability between 0 and 1, which makes it a powerful tool for binary decisions. Whether you are predicting customer churn or flagging unwanted email, logistic regression brings clarity to a binary world.
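The following sketch shows how the sigmoid maps a linear score to a probability, and how the two parameters can be learned by plain gradient descent on the log-loss. It assumes a single feature and invented churn data; `fit_logistic`, the learning rate, and the number of epochs are illustrative choices, not a reference implementation.

```python
import math


def sigmoid(z):
    """Logistic function: maps any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(xs, ys, lr=0.1, epochs=2000):
    """Fit p(y=1 | x) = sigmoid(w * x + b) by gradient descent."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradient of the average log-loss with respect to w and b.
        errors = [sigmoid(w * x + b) - y for x, y in zip(xs, ys)]
        grad_w = sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Hypothetical data: months since last purchase vs. whether the customer churned.
months_inactive = [1, 2, 3, 4, 5, 6]
churned = [0, 0, 0, 1, 1, 1]

w, b = fit_logistic(months_inactive, churned)
p_low = sigmoid(w * 1 + b)   # churn probability after 1 inactive month
p_high = sigmoid(w * 6 + b)  # churn probability after 6 inactive months
print(p_low, p_high)
```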

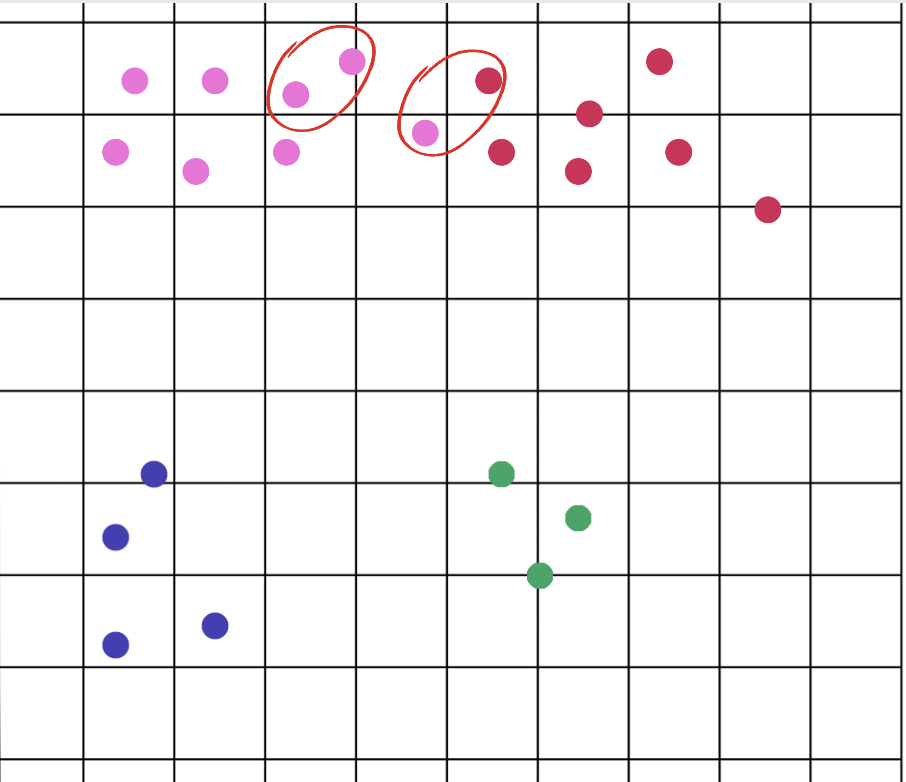

K-Nearest Neighbors (KNN)

KNN is a versatile algorithm used for both classification and regression. It predicts the class or value of a data point from the majority vote (for classification) or the average (for regression) of its K nearest neighbors. The appeal of KNN lies in its simplicity and effectiveness, especially in applications where points that are close together tend to share the same label.
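A minimal sketch of KNN classification, assuming Euclidean distance and a majority vote among the K closest training points. The function name `knn_classify` and the sample points are hypothetical.

```python
import math
from collections import Counter


def knn_classify(train_points, train_labels, query, k=3):
    """Label `query` by majority vote among its k nearest training points."""
    # Sort training examples by Euclidean distance to the query.
    by_distance = sorted(
        zip(train_points, train_labels),
        key=lambda pair: math.dist(pair[0], query),
    )
    nearest_labels = [label for _, label in by_distance[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]


# Hypothetical two-dimensional points belonging to classes "A" and "B".
points = [(1.0, 1.0), (1.5, 2.0), (2.0, 1.5), (6.0, 6.5), (7.0, 6.0), (6.5, 7.0)]
labels = ["A", "A", "A", "B", "B", "B"]

near_a = knn_classify(points, labels, (1.8, 1.6))
near_b = knn_classify(points, labels, (6.2, 6.4))
print(near_a, near_b)
```

For regression, the vote would be replaced by the average of the neighbors' target values.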

Decision Trees

Decision trees split the data into branches that represent a series of decision paths. They are intuitive and easy to interpret, which makes them popular for tasks that require clarity about how decisions are made. Decision trees are powerful, but they are prone to overfitting, especially on complex data.
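The core step in growing a tree is choosing where to split. The sketch below searches for the threshold on one numeric feature that minimizes the weighted Gini impurity of the two resulting groups. Gini impurity is one common criterion (entropy is another); the function names and the data are assumptions made for this example.

```python
def gini(labels):
    """Gini impurity: 0.0 means every label in the group is identical."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))


def best_split(values, labels):
    """Return (threshold, weighted_impurity) for the best split on one feature."""
    unique = sorted(set(values))
    best_threshold, best_impurity = None, float("inf")
    # Candidate thresholds are midpoints between adjacent distinct values.
    for low, high in zip(unique, unique[1:]):
        threshold = (low + high) / 2
        left = [y for x, y in zip(values, labels) if x <= threshold]
        right = [y for x, y in zip(values, labels) if x > threshold]
        impurity = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if impurity < best_impurity:
            best_threshold, best_impurity = threshold, impurity
    return best_threshold, best_impurity


# Hypothetical data: customer age vs. whether they bought a product.
ages = [22, 25, 30, 45, 50, 60]
bought = ["no", "no", "no", "yes", "yes", "yes"]

threshold, impurity = best_split(ages, bought)
print(threshold, impurity)
```

A full tree repeats this search recursively on each branch; limiting the depth is one common way to reduce the overfitting mentioned above.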

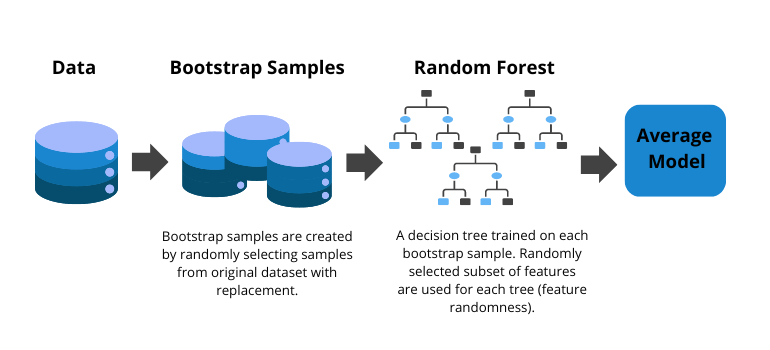

Random Forests

Random Forests improve on decision trees by building an ensemble of trees, each trained on a random sample of the data, and aggregating their predictions. This approach reduces the risk of overfitting, which leads to more accurate and robust models. Random Forests are versatile and apply to both classification and regression tasks.
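As a heavily simplified sketch of the ensemble idea, the code below trains one-level trees (stumps) on bootstrap samples, lets each stump look at one randomly chosen feature, and combines them by majority vote. Real random forests grow deeper trees and sample features at every split; the names `fit_stump`, `fit_forest`, `predict_forest`, the seed, and the data are all assumptions for illustration.

```python
import random
from collections import Counter


def majority(labels, default=None):
    return Counter(labels).most_common(1)[0][0] if labels else default


def fit_stump(rows, labels, feature):
    """One-level tree on one feature: (feature, threshold, left_label, right_label)."""
    overall = majority(labels)
    values = sorted({row[feature] for row in rows})
    # Midpoints between adjacent values; fall back to the only value if there is one.
    thresholds = [(a + b) / 2 for a, b in zip(values, values[1:])] or values
    best = None
    for t in thresholds:
        left = [y for row, y in zip(rows, labels) if row[feature] <= t]
        right = [y for row, y in zip(rows, labels) if row[feature] > t]
        left_label, right_label = majority(left, overall), majority(right, overall)
        errors = sum(y != left_label for y in left) + sum(y != right_label for y in right)
        if best is None or errors < best[0]:
            best = (errors, (feature, t, left_label, right_label))
    return best[1]


def fit_forest(rows, labels, n_trees=15, seed=0):
    rng = random.Random(seed)
    forest = []
    for _ in range(n_trees):
        # Bootstrap: sample row indices with replacement.
        idx = rng.choices(range(len(rows)), k=len(rows))
        # Random feature selection: each stump sees one randomly chosen feature.
        feature = rng.randrange(len(rows[0]))
        forest.append(fit_stump([rows[i] for i in idx], [labels[i] for i in idx], feature))
    return forest


def predict_forest(forest, row):
    votes = [left if row[f] <= t else right for f, t, left, right in forest]
    return majority(votes)


# Hypothetical two-feature data with classes 0 and 1.
rows = [(1, 1), (2, 1), (1, 2), (8, 9), (9, 8), (9, 9)]
labels = [0, 0, 0, 1, 1, 1]

forest = fit_forest(rows, labels)
print(predict_forest(forest, (1.5, 1.5)), predict_forest(forest, (8.5, 8.5)))
```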

Gradient Boosted Decision Trees (GBDT)

GBDT is an ensemble technique that improves the performance of decision trees. Trees are added one at a time, and each new tree is trained to correct the errors left by the trees before it, so that many weak learners combine into one strong predictive model. The method is highly effective and delivers accurate results in both classification and regression tasks.
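The sequential error correction can be sketched for regression with squared error, where the "errors" each new tree fits are simply the residuals of the current ensemble. The example uses one-level regression trees as weak learners; `fit_stump`, `fit_boosted`, `predict_boosted`, the learning rate, and the data are illustrative assumptions rather than any library's API.

```python
def fit_stump(xs, targets):
    """Regression stump: threshold and two leaf means with the lowest squared error."""
    best = None
    values = sorted(set(xs))
    for a, b in zip(values, values[1:]):
        t = (a + b) / 2
        left = [r for x, r in zip(xs, targets) if x <= t]
        right = [r for x, r in zip(xs, targets) if x > t]
        left_mean, right_mean = sum(left) / len(left), sum(right) / len(right)
        error = sum((r - left_mean) ** 2 for r in left) + sum((r - right_mean) ** 2 for r in right)
        if best is None or error < best[0]:
            best = (error, (t, left_mean, right_mean))
    return best[1]


def fit_boosted(xs, ys, n_trees=50, learning_rate=0.1):
    base = sum(ys) / len(ys)  # start from the mean prediction
    predictions = [base] * len(xs)
    stumps = []
    for _ in range(n_trees):
        # Each new stump is fitted to what the ensemble still gets wrong.
        residuals = [y - p for y, p in zip(ys, predictions)]
        t, left_mean, right_mean = fit_stump(xs, residuals)
        stumps.append((t, left_mean, right_mean))
        predictions = [
            p + learning_rate * (left_mean if x <= t else right_mean)
            for x, p in zip(xs, predictions)
        ]
    return base, learning_rate, stumps


def predict_boosted(model, x):
    base, learning_rate, stumps = model
    return base + learning_rate * sum(l if x <= t else r for t, l, r in stumps)


# Hypothetical one-feature regression data.
xs = [1, 2, 3, 4, 5, 6]
ys = [1.0, 1.2, 0.9, 3.1, 2.9, 3.0]

model = fit_boosted(xs, ys)
mean_y = sum(ys) / len(ys)
baseline_mse = sum((y - mean_y) ** 2 for y in ys) / len(ys)
boosted_mse = sum((y - predict_boosted(model, x)) ** 2 for x, y in zip(xs, ys)) / len(ys)
print(baseline_mse, boosted_mse)
```

The small learning rate means each stump contributes only a fraction of its correction, which is a common way to trade more trees for better generalization.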

K-means Clustering

K-means clustering groups data points by similarity and is a fundamental technique in unsupervised learning. By partitioning the data into K distinct clusters, each represented by its centroid, K-means helps identify inherent groupings within the data, which is useful in market segmentation, anomaly detection, and more.
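K-means alternates between two steps: assign each point to its nearest centroid, then move each centroid to the mean of its assigned points. The sketch below assumes Euclidean distance and, purely for reproducibility, uses the first K points as initial centroids (practical implementations usually pick initial centroids randomly or with k-means++). The function name and the data are hypothetical.

```python
import math


def kmeans(points, k, max_iters=100):
    """Lloyd's algorithm: returns (centroids, cluster index for each point)."""
    # Assumption for reproducibility: the first k points are the initial centroids.
    centroids = [tuple(p) for p in points[:k]]
    assignments = None
    for _ in range(max_iters):
        # Assignment step: each point goes to its nearest centroid.
        new_assignments = [
            min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points
        ]
        if new_assignments == assignments:
            break  # converged: no point changed cluster
        assignments = new_assignments
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[c] = tuple(sum(coord) / len(members) for coord in zip(*members))
    return centroids, assignments


# Hypothetical two-dimensional points forming two visible groups.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 0.5), (8.0, 8.0), (9.0, 9.0), (8.5, 9.5)]

centroids, assignments = kmeans(points, k=2)
print(centroids, assignments)
```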

Principal Component Analysis (PCA)

PCA is a dimensionality reduction technique that transforms a large set of variables into a smaller one that still retains most of the information, measured as variance, in the original set. By identifying the principal components, the directions along which the data varies most, PCA reduces complexity, which allows for clearer analysis and more efficient computation.
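As a sketch of how the first principal component can be found, the code below centers the data, builds the sample covariance matrix, and uses power iteration to approximate the eigenvector with the largest eigenvalue. Power iteration is one simple option chosen here to avoid external libraries; numerical packages typically use SVD or a full eigendecomposition instead. The function name and the data are assumptions for illustration.

```python
import math


def first_principal_component(rows, iterations=100):
    """Return (unit direction of greatest variance, 1-D scores of each row)."""
    n, d = len(rows), len(rows[0])
    means = [sum(row[j] for row in rows) / n for j in range(d)]
    centered = [[row[j] - means[j] for j in range(d)] for row in rows]
    # Sample covariance matrix (d x d).
    cov = [
        [sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(d)]
        for i in range(d)
    ]
    # Power iteration: repeatedly multiply by the covariance matrix and normalize.
    # Assumes the start vector is not orthogonal to the leading eigenvector.
    v = [1.0] * d
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    # Project each centered row onto the component to get a 1-D representation.
    scores = [sum(r[j] * v[j] for j in range(d)) for r in centered]
    return v, scores


# Hypothetical data lying exactly on the line y = 2x, so one component captures it all.
rows = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]

component, scores = first_principal_component(rows)
print(component, scores)
```

Here two variables are reduced to one score per row without losing information, because the toy data varies along a single direction; real data would lose whatever variance falls outside the retained components.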

Wrapping Up

Machine learning algorithms are the engines that drive advances in AI and data science. From predicting outcomes with linear regression to grouping data with K-means clustering, these algorithms offer a toolkit for solving a wide variety of problems. Understanding the core principles behind them not only demystifies machine learning but also opens up a world of possibilities for innovation and discovery. Whether you are an experienced data scientist or a curious enthusiast, the journey into machine learning algorithms is both fascinating and immensely rewarding.

To simplify your work with AI, we have developed Next Brain AI, which comes with prebuilt algorithms for extracting insights from your data with little effort. Schedule a demo today to see how it can support your strategic decision-making.

+34 910 054 348

+44 (0) 7903 493 317